ACL 2026 Main Conference

Beyond the Final Actor: Modeling the Dual Roles of Creator and Editor for Fine-Grained LLM-Generated Text Detection

Abstract

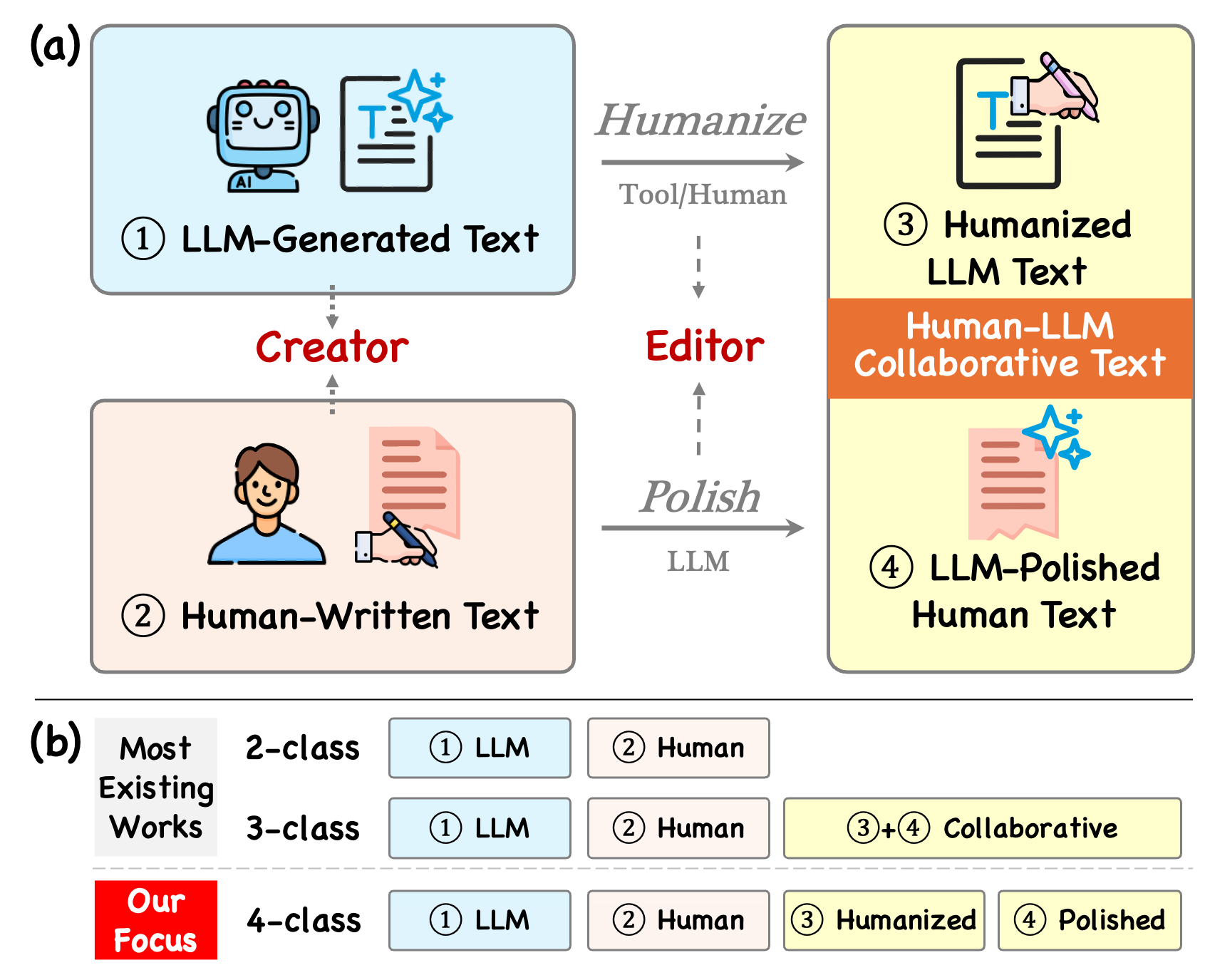

The misuse of large language models (LLMs) calls for precise detection of synthetic text. Existing works mainly follow binary or ternary classification settings, which at best separate pure human text, pure LLM text, and collaborative text. This is insufficient for nuanced regulation, since LLM-polished human text and humanized LLM text often trigger different policy consequences. In this paper, we explore fine-grained LLM-generated text detection under a rigorous four-class setting. To handle this complexity, we propose RACE (Rhetorical Analysis for Creator-Editor Modeling), a fine-grained detection method that characterizes the distinct signatures of the creator and the editor. Specifically, RACE uses Rhetorical Structure Theory to construct a logic graph for the creator's foundation while extracting elementary discourse unit (EDU)-level features for the editor's style. Experiments show that RACE outperforms 12 baselines in identifying fine-grained types with low false alarms, offering a policy-aligned solution for LLM regulation.

Preliminaries: Rhetorical structure provides a stable view of the creator's logical organization.

RACE builds on Rhetorical Structure Theory, which represents a document as a hierarchy of elementary discourse units linked by rhetorical relations such as Elaboration, Contrast, and Background. This structure captures how a text is organized rather than only how it is phrased.
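
To make this representation concrete, here is a minimal Python sketch of an RST tree as a recursive data structure. The names `RSTNode` and `edus` and the example parse are hypothetical; nuclearity (the nucleus/satellite distinction that full RST trees also record) is omitted for brevity, and real trees would come from an RST parser that this sketch does not include.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RSTNode:
    """Toy RST node: a leaf EDU (text set) or an internal node joined by a relation."""
    relation: Optional[str] = None  # e.g. "Contrast"; None for leaf EDUs
    text: Optional[str] = None      # EDU text; None for internal nodes
    children: List["RSTNode"] = field(default_factory=list)

    def is_edu(self) -> bool:
        return not self.children


def edus(node: RSTNode) -> List[str]:
    """Collect the EDU texts under `node` in left-to-right reading order."""
    if node.is_edu():
        return [node.text]
    collected: List[str] = []
    for child in node.children:
        collected.extend(edus(child))
    return collected


# Hypothetical two-level parse of a three-EDU sentence.
tree = RSTNode(
    relation="Background",
    children=[
        RSTNode(text="Regulators need fine-grained labels,"),
        RSTNode(
            relation="Contrast",
            children=[
                RSTNode(text="binary detectors flag pure LLM text,"),
                RSTNode(text="but they miss LLM-polished human text."),
            ],
        ),
    ],
)
print(edus(tree))
```

The leaves carry the wording (the editor's surface), while the internal nodes carry the relations that organize it (the creator's logic).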

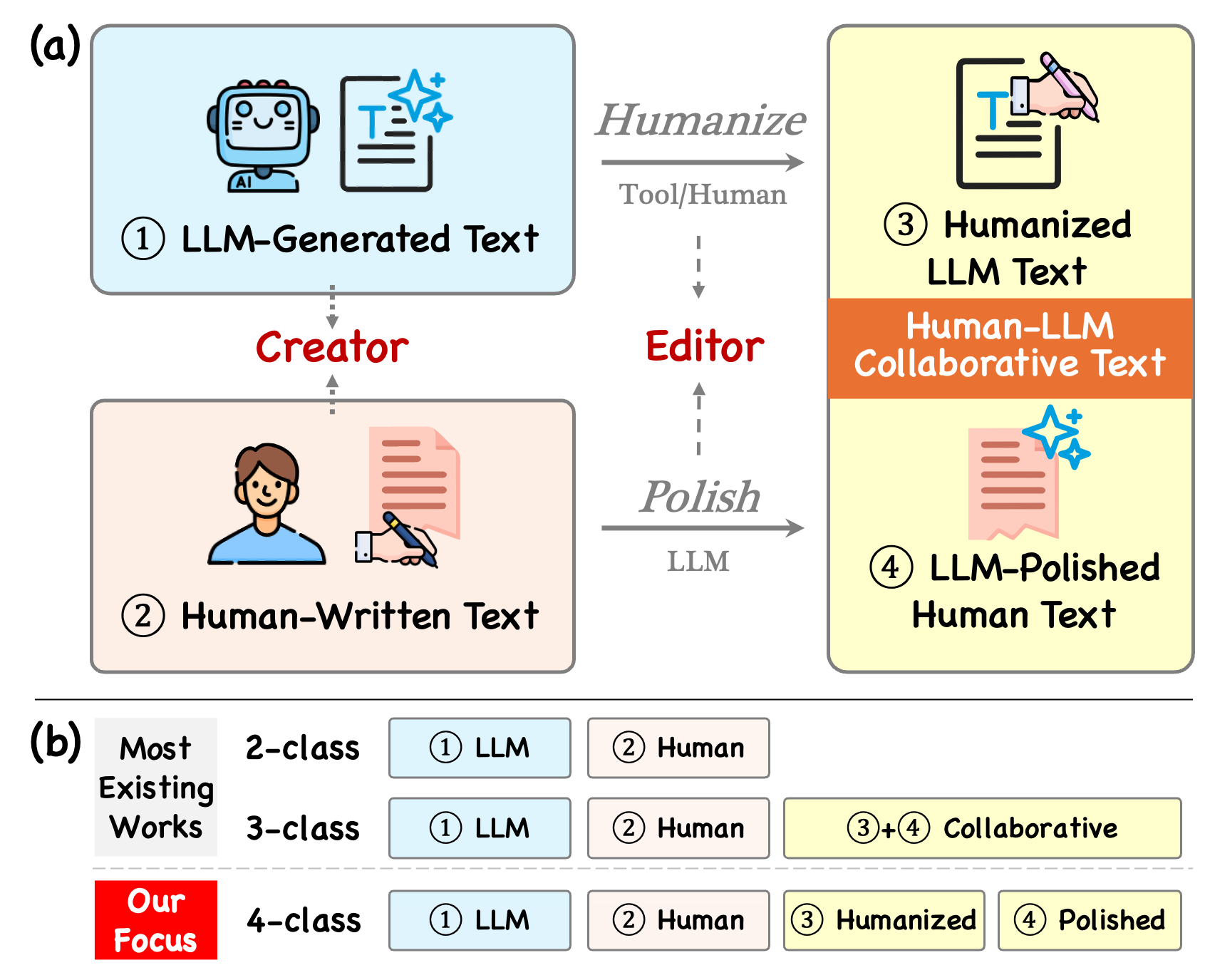

The motivating analysis shows that creator traits persist even after editing. Human-created texts tend to exhibit deeper logical hierarchies and stronger context-establishing relations, while LLM-created texts rely more on flatter, surface-level logical patterns. Those tendencies remain visible in polished and humanized variants, which supports explicit creator-editor modeling.

Distribution of RST relations. (a) Divergence of Creators: Human creators build deeper rhetorical hierarchies (e.g., Attribution, Background), whereas LLMs produce flatter structures relying on surface-level relations (e.g., Elaboration, Evaluation). (b) LLM-Polished: underlying human architecture persists. (c) Humanized: underlying LLM architecture persists.
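
The depth and relation-frequency statistics behind this comparison can be sketched as follows. This is illustrative only: trees are encoded as nested `(relation, children)` tuples with EDU strings as leaves, depth is assumed to count relation levels on the longest root-to-EDU path, and the two toy trees are invented rather than drawn from the paper's data.

```python
from collections import Counter
from typing import Dict, Tuple, Union

# A tree is either an EDU (a string leaf) or a (relation, [children]) tuple.
Tree = Union[str, Tuple[str, list]]


def depth(tree: Tree) -> int:
    """Relation levels on the longest root-to-EDU path; a bare EDU has depth 0."""
    if isinstance(tree, str):
        return 0
    _, children = tree
    return 1 + max(depth(child) for child in children)


def relation_distribution(tree: Tree) -> Dict[str, float]:
    """Share of internal nodes carrying each rhetorical relation."""
    counts: Counter = Counter()
    stack = [tree]
    while stack:
        node = stack.pop()
        if isinstance(node, str):
            continue  # EDUs carry no relation label
        relation, children = node
        counts[relation] += 1
        stack.extend(children)
    total = sum(counts.values())
    return {rel: n / total for rel, n in counts.items()}


# Invented toy trees echoing the pattern in the figure, not real parses.
deep = ("Background", ["e1", ("Attribution", ["e2", ("Elaboration", ["e3", "e4"])])])
flat = ("Elaboration", [("Elaboration", ["e1", "e2"]), ("Evaluation", ["e3", "e4"])])

print(depth(deep), relation_distribution(deep))
print(depth(flat), relation_distribution(flat))
```

Over four EDUs, the "deep" tree nests three distinct relations, while the "flat" tree stops at two levels and is dominated by Elaboration.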

Proposed Method: Rhetorical Analysis for Creator-Editor Modeling

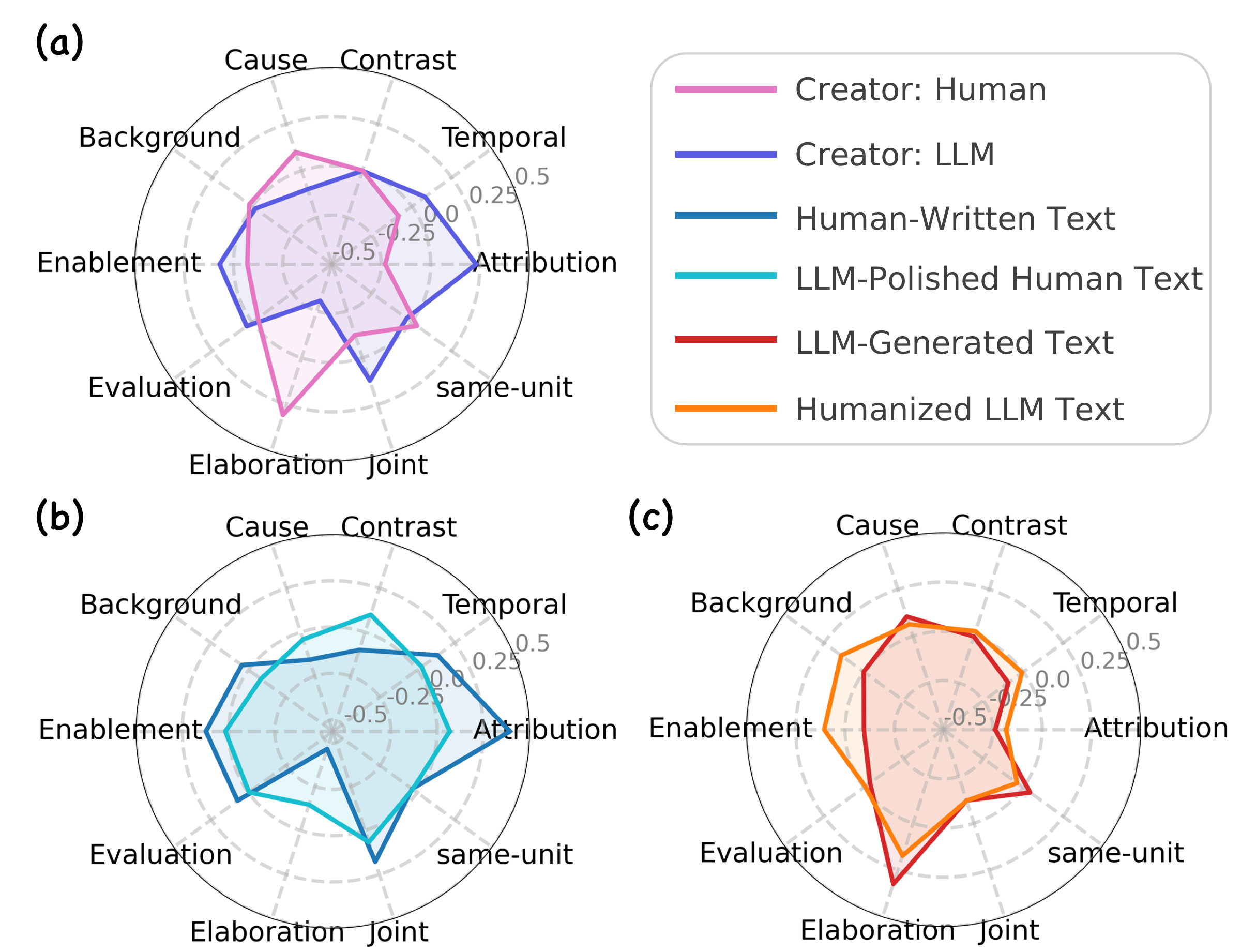

RACE extracts two complementary traces from each document. The editor trace comes from EDU-level representations that preserve local semantic and stylistic refinements. The creator trace comes from a rhetoric-aware graph built from the document's RST parse, which encodes logical dependencies between elementary discourse units.
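
One plausible way to turn an RST parse into such a graph is to create one node per EDU and one node per relation, then link each child to its parent with an edge typed by the parent's relation. The function name `build_graph` and this node/edge schema are assumptions for illustration; the paper's exact graph construction may differ.

```python
from typing import List, Tuple, Union

# A tree is either an EDU (a string leaf) or a (relation, [children]) tuple.
Tree = Union[str, Tuple[str, list]]


def build_graph(tree: Tree):
    """Flatten an RST tree into nodes and typed child -> parent edges.

    nodes[i] is ("edu", text) or ("rel", relation); node 0 is the root.
    edges are (child_id, parent_id, relation), typed by the parent's relation.
    """
    nodes: List[Tuple[str, str]] = []
    edges: List[Tuple[int, int, str]] = []

    def visit(node: Tree) -> int:
        node_id = len(nodes)
        if isinstance(node, str):
            nodes.append(("edu", node))  # editor trace: local wording
            return node_id
        relation, children = node
        nodes.append(("rel", relation))  # creator trace: logical organization
        for child in children:
            child_id = visit(child)
            edges.append((child_id, node_id, relation))
        return node_id

    visit(tree)
    return nodes, edges


tree = ("Contrast", ["binary detectors flag LLM text,",
                     ("Elaboration", ["but miss polished text", "written by humans."])])
nodes, edges = build_graph(tree)
print(edges)
```

Keeping relation nodes explicit, rather than only labeling edges between EDUs, gives each rhetorical relation its own feature vector that later layers can update.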

The graph is initialized with descendant span pooling and a bottleneck projection so that relation nodes carry semantically meaningful yet compact structural signals. Rhetoric-guided message passing then propagates information with relation-specific transformations, allowing the model to capture distinct logical patterns beyond shallow lexical artifacts.
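
The initialization step can be sketched as follows, assuming that descendant span pooling means averaging the embeddings of the EDUs a relation node dominates, and that the bottleneck projection is a down-projection, a ReLU, and an up-projection. All names, dimensions, and weights here are hypothetical; in a trained model the projection matrices would be learned and the EDU embeddings would come from a text encoder.

```python
from typing import Dict, List, Sequence

Vector = List[float]
Matrix = List[List[float]]


def matvec(W: Matrix, x: Sequence[float]) -> Vector:
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def mean_pool(vectors: Sequence[Sequence[float]]) -> Vector:
    """Element-wise mean of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def bottleneck(x: Sequence[float], W_down: Matrix, W_up: Matrix) -> Vector:
    """Compress to a small hidden size, apply ReLU, then project back up."""
    hidden = [max(0.0, v) for v in matvec(W_down, x)]
    return matvec(W_up, hidden)


def init_relation_nodes(edu_emb: List[Vector], spans: Dict[str, List[int]],
                        W_down: Matrix, W_up: Matrix) -> Dict[str, Vector]:
    """spans maps each relation node to the indices of the EDUs it dominates."""
    return {node: bottleneck(mean_pool([edu_emb[i] for i in idx]), W_down, W_up)
            for node, idx in spans.items()}


edu_emb = [[1.0, 0.0, 2.0], [3.0, 2.0, 0.0], [0.0, 4.0, 1.0]]  # 3 EDUs, dim 3
spans = {"root": [0, 1, 2], "elaboration": [1, 2]}
W_down = [[1.0, 0.0, 0.0], [0.0, 1.0, -1.0]]  # 3 -> 2 (the bottleneck)
W_up = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]     # 2 -> 3
features = init_relation_nodes(edu_emb, spans, W_down, W_up)
print(features["elaboration"])  # -> [1.5, 2.5, 4.0]
```

Squeezing the pooled span through a narrow hidden layer is one way to keep relation-node features compact, as the text describes, while still grounding them in the content they govern.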

Finally, RACE reads out the root representation of the logical graph and predicts one of the four fine-grained labels. This design directly matches the paper's central claim: creator identity is most robustly reflected in logical organization, while editor identity is expressed through local wording choices.
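
The readout step amounts to a standard classification head on the root node. The sketch below assumes a single linear layer followed by softmax and a fixed label order; the name `classify_root` and the toy weights are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

# Assumed label order; the paper's internal indexing may differ.
LABELS = ["Human-Written", "LLM-Polished", "LLM-Generated", "Humanized"]


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify_root(root: Sequence[float], W: List[List[float]],
                  b: Sequence[float]) -> Tuple[str, List[float]]:
    """Linear head on the root representation, followed by softmax over four labels."""
    logits = [sum(w * x for w, x in zip(row, root)) + bias for row, bias in zip(W, b)]
    probs = softmax(logits)
    best = max(range(len(LABELS)), key=lambda k: probs[k])
    return LABELS[best], probs


root = [0.2, 1.5]  # toy root vector after message passing
W = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.5], [0.5, -0.5]]
b = [0.0, 0.1, 0.0, 0.0]
label, probs = classify_root(root, W, b)
print(label)  # -> LLM-Polished
```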

Overall architecture of RACE. Given a text piece, RACE (a) first captures both creator and editor traces through rhetorical structure construction and elementary discourse unit extraction. (b) These dual traces are then transformed into a logic-aware graph, where both linguistic expression and logical organization signals are encoded into node features via descendant span pooling and relation-aware projection. (c) Next, Rhetoric-Guided Message Passing propagates information through relation-specific aggregation with basis decomposition to capture complex rhetorical dependencies. (d) Finally, the global text representation is obtained via root pooling for classification.
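
Relation-specific aggregation with basis decomposition follows the formulation popularized by relational graph convolutional networks (R-GCN): each relation matrix W_r is a weighted sum of a few shared basis matrices V_b with relation-specific coefficients a_rb, which limits parameter growth as the number of relation types increases. The sketch below implements one such layer in plain Python, assuming mean aggregation per relation, a self-loop weight `W_self`, a ReLU, and child-to-parent edges so that information flows up toward the root. It illustrates the general technique rather than RACE's exact layer, and the weights are hand-set toy values.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(W: Matrix, x: Vector) -> Vector:
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def combine_bases(coeffs: List[float], bases: List[Matrix]) -> Matrix:
    """Basis decomposition: W_r = sum_b coeffs[b] * bases[b]."""
    rows, cols = len(bases[0]), len(bases[0][0])
    return [[sum(a * V[i][j] for a, V in zip(coeffs, bases)) for j in range(cols)]
            for i in range(rows)]


def message_pass(h: List[Vector], edges: List[Tuple[int, int, str]],
                 coeffs: Dict[str, List[float]], bases: List[Matrix],
                 W_self: Matrix) -> List[Vector]:
    """One layer: h_i' = ReLU(W_self h_i + sum_r mean_{j in N_r(i)} W_r h_j)."""
    W_rel = {rel: combine_bases(a, bases) for rel, a in coeffs.items()}
    incoming: Dict[int, Dict[str, List[int]]] = defaultdict(lambda: defaultdict(list))
    for src, dst, rel in edges:  # messages flow src -> dst (child -> parent)
        incoming[dst][rel].append(src)
    new_h = []
    for i, h_i in enumerate(h):
        acc = matvec(W_self, h_i)
        for rel, srcs in incoming[i].items():
            for src in srcs:  # mean over neighbours of this relation type
                msg = matvec(W_rel[rel], h[src])
                acc = [a + m / len(srcs) for a, m in zip(acc, msg)]
        new_h.append([max(0.0, a) for a in acc])
    return new_h


# Toy graph: node 0 = root (Contrast), node 2 = Elaboration, nodes 1, 3, 4 = EDUs.
h = [[0.0, 0.0], [1.0, 0.0], [0.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
edges = [(1, 0, "Contrast"), (2, 0, "Contrast"), (3, 2, "Elaboration"), (4, 2, "Elaboration")]
bases = [[[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]]]  # identity, swap
coeffs = {"Contrast": [1.0, 0.0], "Elaboration": [0.0, 1.0]}
W_self = [[1.0, 0.0], [0.0, 1.0]]

h1 = message_pass(h, edges, coeffs, bases, W_self)
h2 = message_pass(h1, edges, coeffs, bases, W_self)  # second hop reaches the root
print(h2[0])  # -> [1.5, 0.25]
```

Because edges point from child to parent, a tree of depth d needs d layers before every EDU can influence the root, which is where the root-pooling readout collects the global representation.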

Experiments

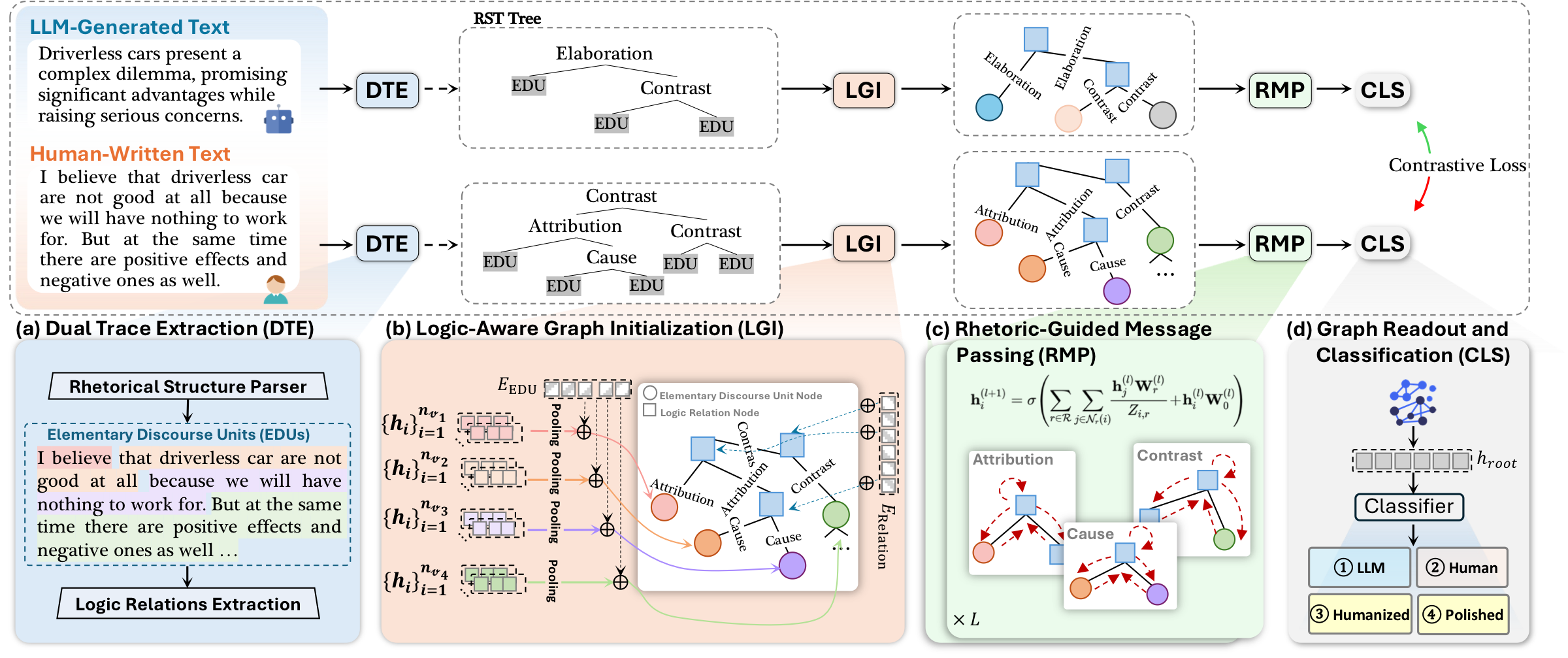

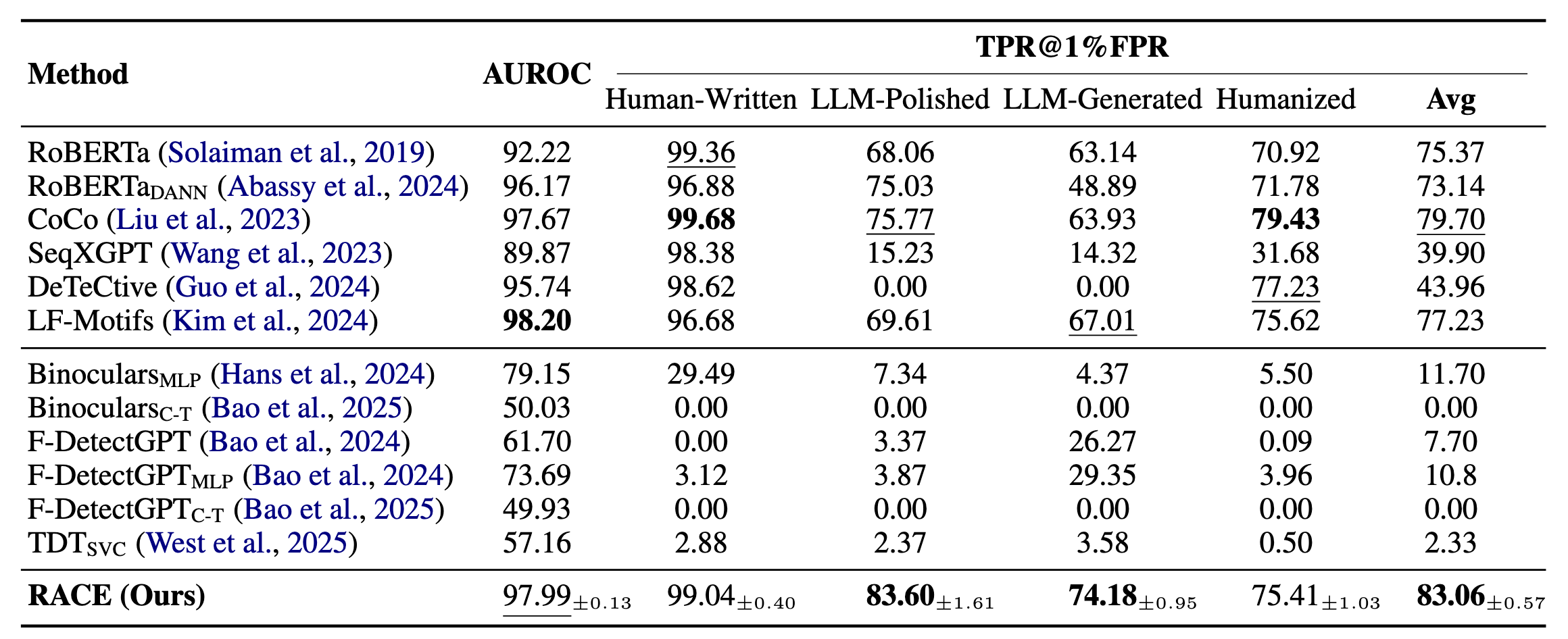

Experiments are conducted on a reorganized four-class split of HART covering Human-Written, LLM-Polished, LLM-Generated, and Humanized text. The evaluation emphasizes macro AUROC and TPR@1% FPR, which respectively measure ranking quality and recall under strict false-alarm control.
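
Both metrics can be computed one-vs-rest for each of the four classes and then averaged. The sketch below is a plain-Python illustration under stated assumptions: AUROC is computed through its pairwise (Mann-Whitney) form, and TPR@1% FPR uses the most permissive threshold that keeps the empirical FPR at or below 1%, without ROC interpolation. The paper's evaluation code may differ in these details, and each class needs at least one positive and one negative example.

```python
import math
from typing import List, Sequence, Tuple


def auroc(pos: Sequence[float], neg: Sequence[float]) -> float:
    """Probability that a random positive outscores a random negative (ties count 1/2)."""
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


def tpr_at_fpr(pos: Sequence[float], neg: Sequence[float], max_fpr: float = 0.01) -> float:
    """TPR at the lowest threshold that lets at most max_fpr of negatives through."""
    k = math.floor(max_fpr * len(neg))  # number of false positives we may tolerate
    threshold = sorted(neg, reverse=True)[k] if k < len(neg) else -math.inf
    return sum(p > threshold for p in pos) / len(pos)


def macro_metrics(scores: List[List[float]], labels: List[int],
                  num_classes: int = 4, max_fpr: float = 0.01) -> Tuple[float, float]:
    """One-vs-rest macro AUROC and macro TPR@FPR from per-class scores."""
    aurocs, tprs = [], []
    for c in range(num_classes):
        pos = [s[c] for s, y in zip(scores, labels) if y == c]
        neg = [s[c] for s, y in zip(scores, labels) if y != c]
        aurocs.append(auroc(pos, neg))
        tprs.append(tpr_at_fpr(pos, neg, max_fpr))
    return sum(aurocs) / num_classes, sum(tprs) / num_classes


print(auroc([0.9, 0.4], [0.5, 0.1]))       # -> 0.75
print(tpr_at_fpr([0.9, 0.4], [0.5, 0.1]))  # -> 0.5
```

TPR@1% FPR is much stricter than AUROC: in the toy call above, one of the two positives ranks below a negative, so AUROC is still 0.75, but at a near-zero false-alarm budget only half of the positives are recovered.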

RACE is compared with 12 adapted baselines spanning learning-based and metric-based detectors. The main result is that explicit creator-editor modeling yields the strongest average TPR@1% FPR, with especially clear gains on the difficult LLM-Polished and LLM-Generated categories.

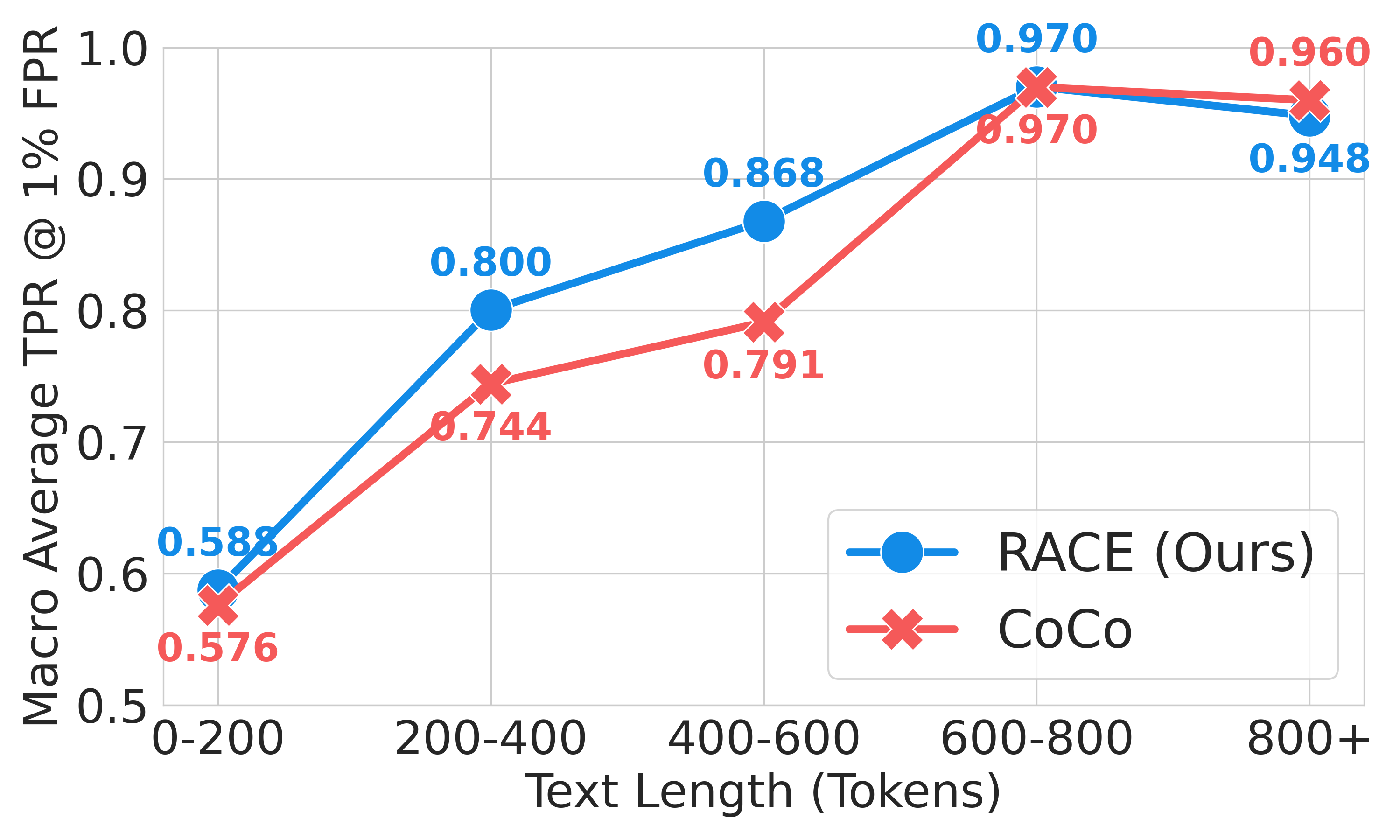

Ablation studies further show that rhetorical relations, contrastive learning, the bottleneck projection, and basis decomposition all contribute to the final behavior. Additional analysis indicates that RACE learns a more discriminative feature space, remains competitive under domain shift, and performs better than strong discourse-aware baselines on shorter inputs.

Quantitative comparison of detection methods under the 4-class setting. For RACE, we report the mean ± std over three runs with different random seeds. Bold and underlined values denote the best and second-best performance, respectively.

Analysis of detection performance of CoCo and our proposed RACE across varying text lengths.

Conclusion

We explored the four-class setting of fine-grained LLM-generated text detection, which distinguishes human-written text, LLM-generated text, LLM-polished human text, and humanized LLM text.

We modeled the dual roles of creator and editor through rhetorical structure construction and elementary discourse unit extraction, and designed the detector, RACE.

By building the logic-aware graph and performing rhetoric-guided message passing, RACE outperformed 12 baselines on the HART benchmark with a low false alarm rate.
